AI SDR vs Human-Reviewed Outreach: What Actually Gets Replies in 2026

I get about 10 cold emails a day now. The volume's gone up noticeably in the last year. And I can tell you exactly when someone's using an AI SDR tool because the emails all read the same way.

First name. Company name. A pain point I don't actually have. An aggressive call to action. No evidence that anyone, human or machine, looked at what my company does before hitting send.

I got one last week that told me I was "struggling to scale my sales team." We're two people. We don't have a sales team. That's the kind of thing that happens when nobody checks what the AI wrote before it goes out.

This isn't an anti-AI piece. We use AI for almost everything in our own outreach. This is about a specific question that more founders are asking right now: should you hand your outreach to an autonomous AI SDR, or should you keep a human reviewing what goes out?

The AI SDR promise vs what actually happens

The pitch from autonomous AI SDR tools is compelling. Plug in your ICP, connect your inbox, and let the AI find leads, write emails, and send on your behalf. No human involvement. Some promise to handle replies too.

I get why founders are drawn to it. When you're doing everything yourself, the idea of handing outreach to a machine and focusing on delivery sounds incredible. I almost went that route before we decided to build Scout.

But the numbers are rough.

AI SDR platforms are seeing 50 to 70 percent annual churn. That means most users who sign up leave within a year. For context, that's higher than the turnover rate of the human SDRs these tools are supposed to replace. Think about that for a second.

Why are people leaving? Because the output reads like AI. And in 2026, everyone can spot it.

What "reads like AI" actually means

It's not bad grammar or robotic language. Modern AI writes fluently. Sometimes too fluently, which is part of the problem.

The tell is the absence of anything specific. I saved a bunch of the AI-generated cold emails I received over a couple of weeks and the patterns were identical across different senders using different tools.

They all opened with my name and company but nothing specific about what we do. They all referenced a pain point that sounded plausible but was clearly generated from a template. Same structure every time: compliment, problem statement, solution pitch, calendar link.

When an email could be sent to 10,000 companies without changing a word beyond the name and company fields, the recipient knows. It feels like a mail merge with extra steps. Because that's exactly what it is.
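One rough way to make the "mail merge with extra steps" test concrete is to mask the merge fields in two emails and check whether anything else differs. The sketch below is only an illustration: the function name `is_mail_merge`, the `{field}` placeholder, and the sample emails are all made up for this example, not taken from any real tool.

```python
def is_mail_merge(body_a, fields_a, body_b, fields_b):
    """Return True if two emails are identical once their merge fields are masked.

    fields_a / fields_b are the per-recipient values (name, company, ...)
    that were substituted into each email.
    """
    def mask(body, fields):
        # Replace longer values first so a short value never clobbers part of a longer one.
        for value in sorted(fields, key=len, reverse=True):
            body = body.replace(value, "{field}")
        # Normalise whitespace and case so trivial differences are ignored.
        return " ".join(body.split()).lower()

    return mask(body_a, fields_a) == mask(body_b, fields_b)


# Hypothetical emails, invented for illustration.
email_a = "Hi Dana, I saw Acme is struggling to scale its sales team."
email_b = "Hi Priya, I saw Birchwood is struggling to scale its sales team."
email_c = "Hi Priya, I saw Birchwood just posted three recruiter roles in Bristol."

print(is_mail_merge(email_a, ["Dana", "Acme"], email_b, ["Priya", "Birchwood"]))  # True
print(is_mail_merge(email_a, ["Dana", "Acme"], email_c, ["Priya", "Birchwood"]))  # False
```

If the first check comes back true for most pairs in a campaign, the only "personalisation" is the name and company fields.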

Gmail and Microsoft have caught on too. Both have deployed AI-specific filtering designed to detect automated outreach. Average B2B cold email reply rates dropped from 6.8 percent in 2023 to around 4 to 5 percent in 2025 as AI-generated volume increased. The volume advantage that autonomous tools rely on is running headlong into deliverability systems built to stop them.

The part everyone skips

Here's what I think most people miss. The quality of a cold email isn't a writing problem. It's a research problem. The email is decided before a single word gets written, by how well you understand the company and why they should care about hearing from you right now.

Most autonomous AI SDR tools skip this or shortcut it badly. They pull basic firmographic data (company name, industry, headcount, maybe a job title) and generate an email from that. The result is a perfectly structured, grammatically correct email that says absolutely nothing about the recipient's actual situation.

We learned this the hard way when we were building out our own outreach process. The first version of our pipeline basically did the same thing, pulled some basic data and wrote from that. The emails were fine. Technically correct, well-written, completely ignorable. Nobody replied because nobody could tell we'd actually looked at their company.

Once we rebuilt the process so the AI does real research first (visiting the website, checking hiring signals, looking for trigger events, mapping pain points), everything changed. Not because the writing got better but because the writing had something real to say.

The research is the product. The email is just the delivery mechanism.

What the data says about keeping humans involved

One controlled study compared fully autonomous AI outreach against AI-plus-human hybrid outreach. The autonomous approach booked more meetings. But the hybrid approach generated 2.3x more revenue from fewer meetings.

Fewer meetings, more money. I keep coming back to that.

It makes sense when you think about it. Autonomous tools optimise for volume. More emails, more opens, more meetings. But meeting quality compounds in a way that meeting volume doesn't. A meeting with someone who was genuinely targeted and researched converts differently than a meeting from a spray campaign. Anyone who's sat through a demo call where the prospect clearly had no idea why they booked it knows this feeling.

Separate research found that companies using AI to augment rather than replace human SDRs see 2.8x more pipeline than those going fully autonomous. The pattern's consistent across the data I've seen. Human involvement produces better commercial outcomes even though it's slower.

How we actually do it

When I say human-reviewed outreach I don't mean writing every email from scratch. That doesn't scale and honestly it's what most founders are already doing, badly, at 10pm after a full day of client work. I've been there. It's miserable.

What I mean is AI handles the time-consuming parts and a human handles the judgment parts.

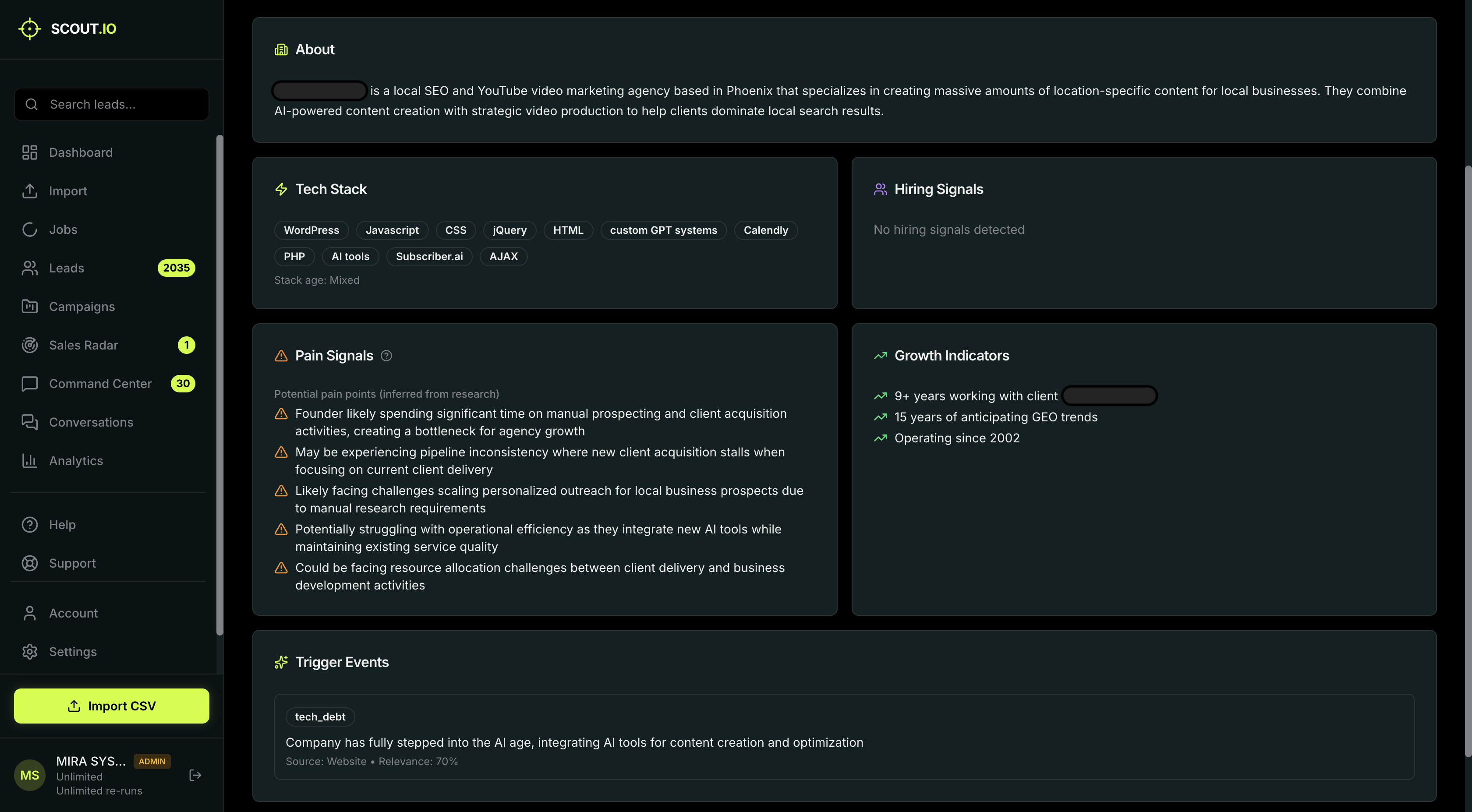

In our process, Scout researches each company. Visits the site, searches the web, pulls together hiring signals, pain points, growth indicators, trigger events. Scores every lead on fit, intent, and timing. Builds a strategy and writes three message variants with different angles.
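To show what scoring on fit, intent, and timing could look like in practice, here is a minimal sketch. It is not Scout's actual scoring model: the `LeadScore` class, the 0-to-1 scale, the weights, and the example companies are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class LeadScore:
    """Three sub-scores, each assumed to be on a 0.0 to 1.0 scale."""
    fit: float      # how closely the company matches the ideal customer profile
    intent: float   # evidence they care about this problem (posts, hiring, tooling)
    timing: float   # whether a recent trigger event makes now a good moment

    def overall(self, weights=(0.4, 0.3, 0.3)):
        # Weighted average; the weights are illustrative, not tuned values.
        w_fit, w_intent, w_timing = weights
        total = w_fit + w_intent + w_timing
        return (w_fit * self.fit + w_intent * self.intent + w_timing * self.timing) / total


def rank_leads(leads):
    """Return company names sorted from strongest to weakest overall score."""
    return sorted(leads, key=lambda name: leads[name].overall(), reverse=True)


# Hypothetical leads, invented for illustration.
leads = {
    "good-fit-bad-timing": LeadScore(fit=0.9, intent=0.2, timing=0.1),
    "solid-all-round": LeadScore(fit=0.6, intent=0.8, timing=0.7),
}
print(rank_leads(leads))
```

Keeping the three sub-scores separate, rather than storing a single number, is what lets a reviewer see why a lead ranked where it did.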

Then I look at it.

Sometimes the AI nails it and I approve without changes. Sometimes it picked the wrong angle and I switch to a different variant. Last week it wrote a great email for a recruitment agency but led with a time-saving angle when I could see from their LinkedIn that they were posting about outreach quality constantly. I switched the angle to quality. Got a reply the next morning.

Sometimes the research surfaces something the AI didn't use in the email, so I add it myself. Sometimes the tone's just off for a particular prospect.

The point is nothing goes out that I haven't personally reviewed. That's not a limitation. It's the whole point.

Autonomous tools can't tell you when they fail

This is the part that actually worries me.

When an autonomous AI SDR sends a bad email, nobody catches it. The email goes out. The recipient ignores it or marks it as spam. The tool counts it as a sent email and moves on. There's no feedback loop that flags "this email referenced a pain point the company doesn't have" or "this opening line is identical to the last 50 you sent."

We built a feature recently that scores the confidence of every email variant. High, medium, or needs review. It tells me which ones to rubber-stamp and which ones to spend time on. An autonomous tool would never build that feature because the entire value proposition is that you don't need to look at the emails. That's a fundamentally different design philosophy.
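A confidence label like this can be sketched as a simple triage rule. The version below is an assumption for illustration only: how the real feature computes confidence isn't described here, and the inputs (`signals_used`, `generic_angle`) and thresholds are hypothetical.

```python
from enum import Enum


class Confidence(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    NEEDS_REVIEW = "needs review"


def label_variant(signals_used, generic_angle):
    """Label one email variant.

    signals_used: how many specific research findings the email references (assumed input).
    generic_angle: True if the email fell back to a generic pitch (assumed input).
    """
    if generic_angle or signals_used == 0:
        return Confidence.NEEDS_REVIEW
    if signals_used >= 2:
        return Confidence.HIGH
    return Confidence.MEDIUM


def review_order(variants):
    """Sort (variant_id, Confidence) pairs so the riskiest variants are read first."""
    priority = {Confidence.NEEDS_REVIEW: 0, Confidence.MEDIUM: 1, Confidence.HIGH: 2}
    return sorted(variants, key=lambda pair: priority[pair[1]])


# Hypothetical queue of variants.
queue = [
    ("v1", label_variant(signals_used=3, generic_angle=False)),
    ("v2", label_variant(signals_used=0, generic_angle=False)),
    ("v3", label_variant(signals_used=1, generic_angle=False)),
]
print([variant_id for variant_id, _ in review_order(queue)])
```

Note that the label only decides where review time goes. Every variant still lands in the queue, including the high-confidence ones.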

With human review you catch the email that says "I noticed you're expanding" when the company's actually laying people off. You catch the variant that sounds like every other AI email in their inbox. You notice when the AI defaults to a generic angle because the research didn't find anything strong.

That quality control doesn't show up in send volume metrics. It shows up in reply rates and whether those replies turn into actual conversations.

The honest downsides

I'm not going to pretend this is perfect.

It's slower. Reviewing 50 emails takes time. Not as much as writing them from scratch, but more than clicking "send all" in an autonomous tool.

It requires judgment. If you approve everything without actually reading it, you've added a step without adding value. The review has to be real. Some days I'm tired and I catch myself skimming. Those are the days I know the quality dips.

It limits scale. One person can meaningfully review maybe 25 to 50 emails a day. For a founder at a small company, that's enough for a healthy pipeline. It's not enterprise volume. If you need to send 10,000 emails a month, this isn't your approach.

For the founders we built this for, people at companies with 1 to 20 employees doing their own outreach, those limitations are actually fine. They don't need 10,000 emails. They need 200 good ones.

Where I think this goes

I don't think AI will always need a human checking every email. The technology will improve. Models will get better at research, at understanding context, at knowing when something's off.

But right now, in 2026, it's not there. And the tools pretending it is are burning their users' domains and reputations. The churn rate isn't because users are impatient. It's because the output isn't good enough to justify removing the human.

We're building for where the technology actually is today. AI does the research and heavy lifting. The human makes the final call. As models improve, we'll let the AI handle more. But the human stays in the loop until the output's genuinely good enough that removing them doesn't hurt the results.

I'd rather build something I never have to walk back than promise something the technology can't deliver yet.

FAQ

What is an AI SDR?

An AI SDR is software meant to replace human sales development reps (SDRs). These tools autonomously find leads, write emails, and send them without human involvement. The pitch is fully automated outreach at scale with zero human effort.

Why are AI SDR tools churning users so fast?

AI SDR platforms are seeing 50 to 70 percent annual churn, mostly because the output quality disappoints. Emails read as generic and templated, reply rates are low, and meetings that do get booked convert poorly because the outreach wasn't targeted. Users expect autonomous pipeline generation and leave when the output needs heavy human oversight anyway.

Is AI outreach just spam?

Not automatically. AI that does genuine research on a company before writing can produce highly relevant outreach. The problem is when AI skips research and generates from basic data. That output is functionally spam regardless of how polished the sentences are.

What is human-reviewed outreach?

AI handles research, scoring, strategy, and first drafts. A human reviews, edits, and approves every message before it sends. Nothing goes out without sign-off. You keep the speed of AI with the quality control of human judgment.

Can human-reviewed outreach scale?

One person can review 25 to 50 emails per day. For small company founders doing their own outreach, that's more than enough. For larger teams the model shifts to humans reviewing templates and samples rather than every message.

How do I know if my outreach tool is actually working?

Ignore send volume and open rates. Track reply rate, reply sentiment, and reply-to-meeting conversion. High opens with low replies means your emails reach inboxes but don't resonate. Replies that are mostly "unsubscribe me" mean targeting or messaging is wrong. The number that matters is how many sends it takes to start a qualified conversation.
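The metrics above are simple ratios, so they're easy to compute from whatever counts your tool exports. The sketch below assumes you can get five raw counts for a period; the function name, the field names, and the sample numbers are made up for illustration.

```python
def outreach_metrics(sends, replies, positive_replies, meetings, qualified_conversations):
    """Compute the ratios worth tracking from raw counts for one period."""
    def ratio(numerator, denominator):
        # Return None rather than dividing by zero when there is no data yet.
        return numerator / denominator if denominator else None

    return {
        "reply_rate": ratio(replies, sends),
        "positive_reply_share": ratio(positive_replies, replies),
        "reply_to_meeting": ratio(meetings, replies),
        "sends_per_qualified_conversation": ratio(sends, qualified_conversations),
    }


# Made-up numbers for one month of sending.
metrics = outreach_metrics(
    sends=200, replies=16, positive_replies=10, meetings=4, qualified_conversations=5
)
for name, value in metrics.items():
    print(name, value)
```

With these made-up counts, it takes 40 sends to start one qualified conversation. That last number is the one to watch over time.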

What should I look for in an outreach tool that keeps humans in the loop?

Visible research: can you see what the AI found before it wrote? Scoring that explains its reasoning. Multiple message variants so you have real choices. And a review step that's required, not optional. If the tool lets you skip review entirely, the human-in-the-loop claim is marketing, not architecture.
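"Required, not optional" can be enforced in code rather than in a settings page. Here is a minimal sketch of that idea, assuming a hypothetical `Draft` type and a `transport` callable standing in for whatever actually delivers the email. None of these names come from a real product.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class NotReviewedError(Exception):
    """Raised when something tries to send a draft nobody approved."""


@dataclass
class Draft:
    recipient: str
    body: str
    approved_by: Optional[str] = None  # stays None until a human signs off


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record which human approved the draft."""
    draft.approved_by = reviewer
    return draft


def send(draft: Draft, transport: Callable[[str, str], None]) -> None:
    """The only send path. It refuses drafts without an approval on record."""
    if not draft.approved_by:
        raise NotReviewedError(f"draft to {draft.recipient} has not been reviewed")
    transport(draft.recipient, draft.body)


# Usage with a stand-in transport that just records what was sent.
outbox = []
draft = Draft("founder@example.com", "Saw your post about outreach quality...")
try:
    send(draft, lambda to, body: outbox.append(to))
except NotReviewedError as err:
    print("blocked:", err)

send(approve(draft, "reviewer"), lambda to, body: outbox.append(to))
print(outbox)
```

Because there is no send function that bypasses the check, skipping review means changing the code, not flipping a toggle.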

Written by Connor Heyward-Fox, Co-founder at Scout.io. We're building an outreach tool where AI does the research and the human makes the final call. If that sounds like what you've been looking for, scout.io is free to try.
